How AI Optimizes Regression Test Coverage

AI is transforming regression testing by making it faster, smarter, and more efficient. Instead of running exhaustive tests on every code change, AI tools analyze commit histories, defect logs, and user behaviors to prioritize high-risk areas. This approach reduces testing time by up to 80% while improving accuracy. Key benefits include:

- Faster testing: AI-powered tools complete tests in minutes, not hours.

- Smarter coverage: Focuses on critical user flows and high-impact areas.

- Lower maintenance: Self-healing test scripts reduce manual updates.

- Cost savings: Fixing a bug in production can cost up to 30 times more than catching it early.

To get started, set up tools like Ranger, configure your environment, and use AI-driven insights to refine your testing process. This method balances speed and quality, helping teams deliver reliable software on tight schedules.

Understanding Regression Test Coverage and AI

What Is Regression Test Coverage?

Regression testing involves re-running existing tests after code changes to ensure that previously functional features remain unaffected. It acts as a safety net, catching any unintended issues that might arise from updates. Given how interconnected modern applications are, even a small change in one part of the system can lead to unexpected problems elsewhere.

Regression test coverage is a way to measure how effectively your test suite evaluates the application. The goal isn’t just to run tests but to ensure they target the critical areas users interact with. Key metrics include:

- Code coverage: Highlights untested sections of the code.

- Functional coverage: Confirms that essential user workflows are tested.

- Branch coverage: Checks that every branch of each decision point (for example, both the true and false outcomes of an if statement) is exercised.

Why does this matter? The cost of poor software quality is staggering: an estimated $2.41 trillion for the U.S. economy in 2022. CircleCI’s analysis puts it in concrete terms:

"A bug caught in CI costs a developer a few minutes and a one-line fix. The same bug caught in production costs an incident ticket, a war room, a hotfix branch, a re-deployment, and whatever customer trust was lost in between."

These metrics lay the foundation for AI’s transformative role in refining regression testing.

How AI Improves Regression Testing

AI makes regression testing more focused and efficient. Instead of treating all tests equally, machine learning models sift through commit histories, defect logs, and change patterns to pinpoint "hotspots", the areas most prone to bugs. Tests covering these high-risk areas run first for faster feedback. Additionally, impact analysis maps out how changes ripple through microservices and identifies which tests are relevant, so unnecessary tests are skipped. This approach keeps pipelines moving smoothly and avoids the delays common in traditional CI/CD workflows.
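As a rough illustration of hotspot scoring, the sketch below ranks tests by how often the files they exercise have changed and how often those changes were bug fixes. It is a simple heuristic, not a trained model and not how any particular tool works; the `Commit` structure, the `fix_weight` value, and all file and test names are assumptions made for the example.

```python
from collections import Counter
from dataclasses import dataclass


@dataclass(frozen=True)
class Commit:
    files: tuple[str, ...]  # paths touched by the commit
    is_bug_fix: bool        # assumed to come from a linked defect ticket


def hotspot_scores(commits: list[Commit], fix_weight: float = 3.0) -> dict[str, float]:
    """Score each file: 1 point per change, plus fix_weight when the change was a bug fix."""
    scores: Counter = Counter()
    for commit in commits:
        for path in commit.files:
            scores[path] += 1.0 + (fix_weight if commit.is_bug_fix else 0.0)
    return dict(scores)


def prioritize_tests(test_to_files: dict[str, set[str]], scores: dict[str, float]) -> list[str]:
    """Order tests by the summed risk of the files they exercise, riskiest first."""
    def risk(test: str) -> float:
        return sum(scores.get(path, 0.0) for path in test_to_files[test])
    # Ties are broken alphabetically so the order is deterministic.
    return sorted(test_to_files, key=lambda test: (-risk(test), test))


# Hypothetical commit history and test-to-file mapping.
history = [
    Commit(("checkout/cart.py", "checkout/tax.py"), is_bug_fix=True),
    Commit(("checkout/cart.py",), is_bug_fix=False),
    Commit(("profile/avatar.py",), is_bug_fix=False),
]
tests = {
    "test_checkout_flow": {"checkout/cart.py", "checkout/tax.py"},
    "test_avatar_upload": {"profile/avatar.py"},
    "test_tax_rounding": {"checkout/tax.py"},
}
scores = hotspot_scores(history)
ordered = prioritize_tests(tests, scores)
print(ordered)
```

In practice the weights would be learned from historical outcomes rather than fixed by hand, but the ranking step stays the same.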

AI also helps uncover gaps in testing. Automated tools analyze applications to learn user behaviors and generate test cases for scenarios that manual testing might overlook. Alexia Hope from Research Snipers sums it up well:

"The goal is not to conduct additional tests. Rather, the goal is to run the right ones at the right time."

Lastly, AI-powered self-healing mechanisms reduce the burden of maintaining test scripts, ensuring they adapt automatically to changes.

Setting Up AI Tools for Regression Testing

Prerequisites for AI-Powered Test Optimization

Before you dive into using AI for regression testing, make sure you’ve got the technical groundwork in place. Start by installing Node.js v20+. If you're working on a Linux system, you’ll also need Xvfb to support the headless Chromium browser.

Your version control setup is just as crucial. In Continuous Integration (CI), make the full Git history available by setting fetch-depth: 0 in your checkout step, so the AI agent can generate precise diffs. For authentication, you have two main options: capture session cookies once by creating a CI profile, or provide login instructions so tokens are generated dynamically.
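To see why full history matters, consider how a diff against the main branch is typically computed. The sketch below uses the standard `git diff --name-status <base>...HEAD` form, which compares HEAD with its merge base; in a shallow clone that merge base may be missing and the command can fail. The `origin/main` default and the sample output are assumptions for illustration, and this is not Ranger's own diffing code.

```python
import subprocess


def parse_name_status(diff_output: str) -> dict[str, str]:
    """Parse `git diff --name-status` output into {path: status letter}."""
    changes: dict[str, str] = {}
    for line in diff_output.splitlines():
        if not line.strip():
            continue
        fields = line.split("\t")
        # Renames list the old and the new path; keep the new one.
        status, path = fields[0], fields[-1]
        changes[path] = status[0]  # "R100" becomes "R"
    return changes


def changed_files(base_ref: str = "origin/main") -> dict[str, str]:
    """Diff HEAD against its merge base with base_ref (requires enough history)."""
    result = subprocess.run(
        ["git", "diff", "--name-status", f"{base_ref}...HEAD"],
        capture_output=True, text=True, check=True,
    )
    return parse_name_status(result.stdout)


# Hypothetical output, parsed without touching a real repository.
sample = "M\tsrc/cart.ts\nA\tsrc/coupon.ts\nR100\tsrc/old.ts\tsrc/new.ts\n"
parsed = parse_name_status(sample)
print(parsed)
```

Calling `changed_files()` inside a CI job is where the full checkout pays off, since the three-dot form needs the commit where the branch diverged.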

Next, grant your AI coding agent (e.g., Claude Code) the necessary permissions to execute tasks within your project directory. Update the agent’s settings file (e.g., .claude/settings.local.json) to enable commands like Bash, Read, Glob, and Grep. These permissions are essential for the agent to manage files and execute scripts effectively.

Once these steps are complete, you’re ready to configure Ranger for automated testing.

Configuring Ranger for Automated Testing

With your environment ready, it’s time to set up Ranger to streamline your testing process. Begin by creating a CI profile that captures your environment’s configurations. Run this command in your project’s root directory:

ranger profile add <name> --ci

This command securely captures and encrypts your browser’s authentication cookies. The encrypted data is stored in the .ranger/ci/ directory and should be committed to your repository. Decryption will require a valid RANGER_CLI_TOKEN.

For dynamic environments, like Vercel preview deployments, use the ${VAR_NAME} syntax in your profile’s baseUrl configuration. This ensures that environment variables are resolved during runtime. If your deployment includes additional security measures, you can set up a custom header:
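The idea behind `${VAR_NAME}` placeholders can be sketched in a few lines. This is only an illustration of runtime substitution in general, not Ranger's implementation; the `PREVIEW_HOST` variable and the URL are made up for the example.

```python
import re

# Matches ${NAME}, where NAME is a conventional environment variable name.
_PLACEHOLDER = re.compile(r"\$\{([A-Za-z_][A-Za-z0-9_]*)\}")


def resolve_placeholders(template: str, env: dict[str, str]) -> str:
    """Replace each ${VAR_NAME} with its value, failing loudly if one is unset."""
    def substitute(match: re.Match) -> str:
        name = match.group(1)
        if name not in env:
            raise KeyError(f"environment variable {name} is not set")
        return env[name]
    return _PLACEHOLDER.sub(substitute, template)


resolved = resolve_placeholders(
    "https://${PREVIEW_HOST}/app",
    {"PREVIEW_HOST": "my-branch.example.vercel.app"},  # hypothetical value
)
print(resolved)
```

Passing `dict(os.environ)` as `env` would resolve against the real process environment; failing on a missing variable is a deliberate choice here so that a misconfigured preview URL is caught before any test runs.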

ranger profile config set preview header.x-vercel-protection-bypass '<token>'

After setting this up, run the following command:

ranger skillup

This command installs markdown files that guide your AI coding agent on how to perform feature reviews and browser verifications. Once configured, Ranger offers a Feature Review Dashboard. This dashboard includes screenshots, video recordings, and Playwright traces for your team to review. Verified features can then be converted into permanent tests with just one click.

Implementing AI-Driven Test Selection and Prioritization

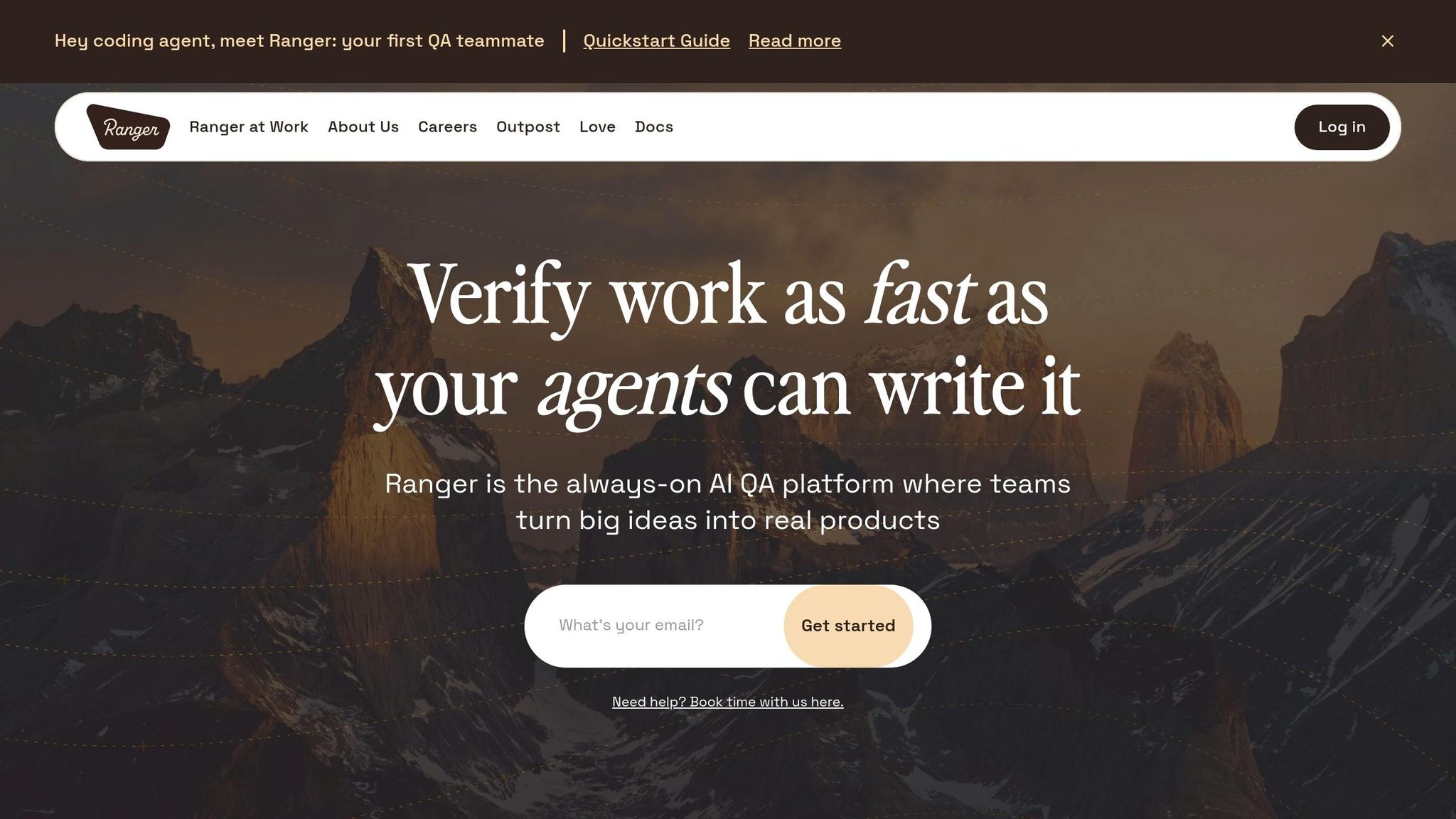

Full Regression vs AI-Optimized Testing: Key Differences

Selecting and Prioritizing Tests with AI

Once Ranger is set up, you can leverage AI to select and prioritize regression tests based on code changes. This type of impact analysis can reduce regression testing time by 60–80%, all while improving test coverage.
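A minimal sketch of change-based test selection, assuming you already have a reverse dependency map (which modules depend on which) and a map from tests to the modules they target. Both maps and every name in them are hypothetical; real tools typically derive them from build graphs or coverage data.

```python
from collections import deque


def affected_modules(changed: set[str], dependents: dict[str, set[str]]) -> set[str]:
    """Walk reverse dependencies breadth-first, starting from the changed modules."""
    seen = set(changed)
    queue = deque(changed)
    while queue:
        module = queue.popleft()
        for dependent in dependents.get(module, set()):
            if dependent not in seen:
                seen.add(dependent)
                queue.append(dependent)
    return seen


def select_tests(
    changed: set[str],
    dependents: dict[str, set[str]],
    test_targets: dict[str, set[str]],
) -> list[str]:
    """Keep only the tests that target at least one impacted module."""
    impacted = affected_modules(changed, dependents)
    return sorted(test for test, targets in test_targets.items() if targets & impacted)


# Hypothetical graph: cart depends on pricing, checkout depends on cart.
dependents = {"pricing": {"cart"}, "cart": {"checkout"}, "avatar": {"profile"}}
test_targets = {
    "test_checkout": {"checkout"},
    "test_pricing": {"pricing"},
    "test_profile": {"profile"},
}
selected = select_tests({"pricing"}, dependents, test_targets)
print(selected)
```

A change to `pricing` pulls in the checkout test through the dependency chain, while the unrelated profile test is skipped.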

Ranger operates through feature reviews. After a coding agent completes a feature, running ranger go activates AI browser agents to validate user flows. These agents focus on verifying high-impact areas, ensuring critical functionality works as intended, rather than treating all tests with the same level of importance.

"Ranger functions like an AI-driven QA team: once a coding agent completes work, local browser agents verify that user flows function as intended." – Ranger Feature Review

The AI prioritizes verification by analyzing changes in the codebase. If issues are detected during verification, Ranger flags the failures and reports them back to the coding agent. This creates an iterative feedback loop in which bugs are fixed during development, where a fix can cost up to 30 times less than in production. By focusing on what matters most, Ranger helps refine test suites, eliminating redundancies and closing gaps in coverage.
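The shape of that verify-and-fix loop can be sketched generically. The `verify` and `fix` callables below are stand-ins invented for the example (they do not call Ranger or any coding agent), and the round limit is an assumption.

```python
from typing import Callable


def verify_until_green(
    verify: Callable[[], list[str]],
    fix: Callable[[list[str]], None],
    max_rounds: int = 3,
) -> tuple[bool, int]:
    """Alternate verification and fixing until no failures remain or rounds run out."""
    for round_number in range(1, max_rounds + 1):
        failures = verify()
        if not failures:
            return True, round_number
        if round_number < max_rounds:
            fix(failures)  # hand the failures back to whoever wrote the code
    return False, max_rounds


# Simulated state: two open bugs, and each fix round resolves one of them.
open_bugs = ["login button unresponsive", "cart total stale"]


def fake_verify() -> list[str]:
    return list(open_bugs)


def fake_fix(failures: list[str]) -> None:
    open_bugs.remove(failures[0])


passed, rounds = verify_until_green(fake_verify, fake_fix)
print(passed, rounds)
```

Capping the number of rounds keeps a stubborn failure from looping forever; at that point the failure would go to a human reviewer.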

Removing Redundant Tests and Closing Coverage Gaps

Ranger doesn’t stop at prioritizing tests; it also streamlines your test suite. Removing redundant tests and making sure each remaining one adds distinct value keeps your testing efforts efficient. Each verification cycle gathers evidence for review, which makes it easy to convert verified features into permanent tests with minimal effort.

For example, once a feature passes verification, it can be turned into an end-to-end test with just a single click, helping to address any coverage gaps seamlessly. Additionally, Ranger’s AI-driven visual testing capabilities can cut false positives in UI regression testing by up to 80%, freeing up your team to focus on real issues instead of chasing phantom bugs.

To further simplify maintenance, Ranger incorporates self-healing mechanisms for brittle UI tests. This reduces the need for constant updates and allows your team to concentrate on improving core functionality.
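One simple way to think about redundancy and coverage gaps is in terms of sets: a test is a redundancy candidate if everything it covers is already covered by broader tests, and a gap is a required behavior that no test covers. The sketch below assumes you can label each test with the behaviors it exercises; the labels and test names are hypothetical, and this is not a description of Ranger's internal logic.

```python
def redundant_tests(coverage: dict[str, set[str]]) -> list[str]:
    """Return tests whose covered behaviors are all covered by tests already kept."""
    kept_union: set[str] = set()
    redundant: list[str] = []
    # Visit the broadest tests first so that narrow duplicates are the ones flagged.
    for name in sorted(coverage, key=lambda n: (-len(coverage[n]), n)):
        if coverage[name] <= kept_union:
            redundant.append(name)
        else:
            kept_union |= coverage[name]
    return sorted(redundant)


def coverage_gaps(required: set[str], coverage: dict[str, set[str]]) -> set[str]:
    """Return required behaviors that no test covers."""
    covered: set[str] = set()
    for behaviors in coverage.values():
        covered |= behaviors
    return required - covered


# Hypothetical behavior labels per test.
coverage = {
    "test_checkout_e2e": {"add_to_cart", "apply_coupon", "pay"},
    "test_add_to_cart": {"add_to_cart"},
    "test_coupon": {"apply_coupon"},
    "test_login": {"login"},
}
required = {"add_to_cart", "apply_coupon", "pay", "login", "reset_password"}

candidates = redundant_tests(coverage)
gaps = coverage_gaps(required, coverage)
print(candidates, gaps)
```

Overlapping coverage does not prove two tests assert the same things, so the flagged tests are candidates for review rather than automatic deletion.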

Full Regression vs. AI-Optimized Testing Comparison

The table below highlights the key differences between traditional full regression testing and Ranger's AI-optimized approach:

| Feature | Full Regression Testing | AI-Optimized Regression (Ranger) |
|---|---|---|
| Execution Time | Can take hours or even days to complete | Often completed in minutes or under an hour |
| Coverage Depth | Limited by time and manual script creation | Includes edge cases and user flows |
| Risk Focus | Treats every test case as equally important | Focuses on high-impact and high-risk areas |
| Maintenance | High; UI changes frequently break static scripts | Low; self-healing adapts to changes automatically |
| ROI Impact | High resource drain and slower release cycles | Cuts testing time by 60–80% |

"Embracing a focused and selective test case prioritization approach is crucial for optimizing testing resources, accelerating time-to-market, and elevating overall software quality." – Cristiano Caetano, Head of Growth at Smartesting

Monitoring and Improving Coverage with AI Analytics

Using AI for Real-Time Coverage Insights

Once you've optimized your test selection, keeping an eye on your regression coverage ensures it stays effective. Tools like Ranger make this easier by offering real-time risk signaling. It analyzes execution results and unresolved issues, giving you clear insights into release readiness while flagging risks before deployment happens.

Ranger's AI-powered dashboard serves as a central hub for both manual and automated tests. This means you can easily track and address any emerging coverage gaps. And with integrated Slack notifications, your team gets immediate alerts about coverage failures, so nothing slips through the cracks. By using machine learning to dig into historical test results and recent code changes, the system identifies areas most likely to fail during the current testing cycle.

Another standout feature is its ability to filter out visual noise. The AI can tell the difference between minor cosmetic changes and real UI bugs, cutting down false positives in regression testing by up to 80%. This ensures your team spends time fixing real defects rather than chasing down non-issues.
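For contrast, here is what a non-AI baseline for noise filtering looks like: a pixel comparison with two tolerances, one per pixel and one for the share of changed pixels. AI-based tools go further by judging what a change means, but the sketch shows the basic idea of ignoring small differences. Screenshots are modeled as grids of grayscale values, and both thresholds are arbitrary assumptions.

```python
Image = list[list[int]]  # rows of grayscale values in the range 0..255


def changed_ratio(before: Image, after: Image, pixel_tolerance: int = 8) -> float:
    """Fraction of pixels whose value differs by more than pixel_tolerance."""
    if len(before) != len(after) or any(len(b) != len(a) for b, a in zip(before, after)):
        raise ValueError("screenshots must have identical dimensions")
    total = sum(len(row) for row in before)
    changed = sum(
        1
        for row_before, row_after in zip(before, after)
        for pixel_before, pixel_after in zip(row_before, row_after)
        if abs(pixel_before - pixel_after) > pixel_tolerance
    )
    return changed / total if total else 0.0


def is_real_regression(before: Image, after: Image, max_changed_ratio: float = 0.01) -> bool:
    """Flag a regression only when more than max_changed_ratio of pixels changed."""
    return changed_ratio(before, after) > max_changed_ratio


baseline = [[200] * 10 for _ in range(10)]

# Cosmetic noise: every pixel shifts slightly, as with anti-aliasing differences.
antialiased = [[203] * 10 for _ in range(10)]

# A real change: a 3x3 block of pixels goes black.
broken = [row[:] for row in baseline]
for y in range(3):
    for x in range(3):
        broken[y][x] = 0

print(is_real_regression(baseline, antialiased), is_real_regression(baseline, broken))
```

A fixed threshold like this still misfires on layout shifts and dynamic content, which is the gap learned models aim to close.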

Self-Healing and Continuous Test Maintenance

Test maintenance can be a major time sink, eating up about 40% of a QA team's efforts. Ranger tackles this with self-healing capabilities, automatically updating broken selectors so your test suite stays effective without constant manual intervention.

As your application evolves, this automated maintenance prevents coverage from falling behind. When code changes occur, Ranger updates only the tests that need it, keeping things lean and efficient. Teams using AI-driven testing have reported cutting regression cycle times by 40–70%, all while maintaining high-quality releases.

This level of automation strengthens your regression suite, ensuring it remains reliable and aligned with Ranger's commitment to efficient, dependable testing.

Measuring the Impact of AI Optimization

Key Metrics to Track Optimization Success

When using AI to enhance your regression testing, it's essential to measure its impact with clear metrics. Start with test execution cycle time - this reveals how much faster your regression suite runs after leveraging AI for test selection and prioritization. Many teams adopting AI-driven methods report cutting execution time by 60%–80%.

Another critical metric is the defect detection rate, which shows how effectively AI identifies key bugs compared to traditional methods. Alongside this, monitor escaped defects - these are the bugs that slip through to production. Fixing bugs in production can cost up to 30 times more than addressing them during development. By using AI-driven regression testing, organizations often aim to reduce production bugs by at least 30%.

It's also worth evaluating the savings in test maintenance brought by AI self-healing tools. To calculate your overall ROI, compare the cost of AI tools against the potential savings from catching defects earlier - those savings can be substantial, given the 30x cost difference.
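The metrics above reduce to simple arithmetic. The sketch below shows one way to compute them; every input number is hypothetical, and the ROI formula assumes each defect caught early avoids the full production premium (the 30x figure cited above), which is an upper bound rather than a typical case.

```python
def percent_reduction(before: float, after: float) -> float:
    """Percentage drop from before to after, e.g. in cycle time."""
    return 100.0 * (before - after) / before


def defect_detection_rate(caught_before_release: int, escaped: int) -> float:
    """Share of all known defects that were caught before release, in percent."""
    total = caught_before_release + escaped
    return 100.0 * caught_before_release / total if total else 0.0


def roi(
    tool_cost: float,
    defects_shifted_left: int,
    dev_fix_cost: float,
    production_multiplier: float = 30.0,
) -> float:
    """Net savings divided by tool cost; 1.0 means the tool paid for itself twice over."""
    savings = defects_shifted_left * dev_fix_cost * (production_multiplier - 1.0)
    return (savings - tool_cost) / tool_cost


# Hypothetical figures for one quarter.
cycle_time_cut = percent_reduction(before=120.0, after=30.0)            # minutes per run
detection = defect_detection_rate(caught_before_release=47, escaped=3)
quarterly_roi = roi(tool_cost=20_000.0, defects_shifted_left=10, dev_fix_cost=200.0)
print(cycle_time_cut, detection, quarterly_roi)
```

With these inputs the cycle time drops by 75%, 94% of defects are caught before release, and the savings of $58,000 against a $20,000 tool cost give an ROI of 1.9.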

Now, let’s explore how Ranger’s hosted infrastructure supports scalability for growing testing needs.

Using Ranger's Hosted Infrastructure for Scalability

As testing demands increase, having a scalable infrastructure is crucial. Ranger’s hosted infrastructure offers centralized visibility, traceability, and synchronized CI/CD regression workflows. This setup eliminates the hassle of managing additional servers or worrying about capacity, making it ideal for large-scale testing.

When running hundreds or even thousands of tests, the scalability of Ranger’s cloud-based execution stands out. With thread doubling, test speed can increase by up to 5x, significantly improving ROI. This setup allows teams to focus on enhancing quality strategies instead of managing test environments. For organizations aiming for a 40–70% reduction in test execution cycle time, while maintaining thorough coverage of critical user paths, this approach is a game-changer.

Conclusion

AI-powered regression testing is reshaping the way software teams handle quality assurance. By leveraging intelligent test selection, automated prioritization, and self-healing capabilities, teams can reduce their regression testing scope by about one-third while still catching nearly 95% of defects. Rather than replacing human expertise, this approach allows QA professionals to move beyond repetitive tasks and focus on areas requiring deeper analysis, like exploratory testing, edge cases, and complex problem-solving.

Success comes from striking the right balance between automation and human oversight. AI is great at managing repetitive, high-priority test cases and adapting to code changes, but manual testing remains critical for evaluating usability and addressing nuanced scenarios.

Platforms like Ranger make this balance achievable. With AI-driven test creation and human-reviewed test code, Ranger integrates seamlessly with tools like Slack, GitHub, and CI/CD pipelines, delivering real-time testing insights without interrupting workflows. Its scalable, hosted infrastructure means teams can focus on delivering features faster without worrying about managing testing environments.

To get started, identify your high-traffic user flows and modules with a history of defects, then apply AI optimization to these key areas. Track metrics like test execution time, defect detection rates, and escaped defects to measure the impact. Over time, as AI tools learn from each test cycle, they become more precise and efficient, improving coverage and reducing effort.

The future of regression testing doesn’t force a choice between speed and quality. Instead, it blends the best of both through smart automation and human expertise.

FAQs

How does AI decide which regression tests to run after a commit?

AI selects which regression tests to execute by examining code changes, usage patterns, and historical defects to pinpoint high-risk areas. Rather than running the full test suite, it concentrates on tests that target these specific areas. This approach minimizes redundant testing and speeds up feedback, making the process more efficient.

What data do I need for AI to optimize my regression coverage?

To optimize regression coverage, AI depends on several key data points: code changes, past test results, and bug reports. It starts by analyzing recent code updates to determine which tests are most relevant. Historical results help identify and manage flaky tests that might skew outcomes.

Incorporating information like bug classification, severity, and priority allows AI to zero in on the most critical areas. This approach not only cuts down on running unnecessary tests but also boosts overall testing efficiency.
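As an example of what past test results make possible, the sketch below flags a test as flaky when it has both passed and failed on the same commit, since the code did not change between those runs. The run-record format and all names are assumptions for illustration.

```python
from collections import defaultdict

# Each record is (test name, commit SHA, passed).
Run = tuple[str, str, bool]


def flaky_tests(runs: list[Run]) -> set[str]:
    """Flag tests that produced both outcomes on an identical commit."""
    outcomes: dict[tuple[str, str], set[bool]] = defaultdict(set)
    for test, commit, passed in runs:
        outcomes[(test, commit)].add(passed)
    return {test for (test, _commit), seen in outcomes.items() if len(seen) == 2}


# Hypothetical history.
runs = [
    ("test_search", "abc123", True),
    ("test_search", "abc123", False),  # same commit, different outcome
    ("test_login", "abc123", False),
    ("test_login", "def456", True),    # outcome changed along with the code
]
flaky = flaky_tests(runs)
print(flaky)
```

Here `test_login` is not flagged: it failed on one commit and passed on a later one, which is consistent with a genuine fix.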

How do self-healing tests work, and when can they fail?

Self-healing tests leverage AI to identify and repair broken test scripts when changes occur in the UI or DOM. By analyzing contextual signals such as visual cues, element attributes, and historical trends, they can automatically adjust locators to keep tests functional.

However, these tests can still encounter issues. Major UI overhauls or unclear signals can make it difficult for the AI to adapt, resulting in outdated test scripts and potential errors.
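A simplified sketch of the idea, covering both the healing case and the failure case: the locator first tries the recorded id, then falls back to the element whose other attributes match best, and gives up when the best match is weak or ambiguous. The `Element` model, the attribute set, and the 0.6 threshold are all assumptions; production tools use richer signals than exact attribute equality.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass(frozen=True)
class Element:
    id: str
    tag: str
    text: str
    role: str


def similarity(recorded: Element, candidate: Element) -> float:
    """Share of non-id attributes that still match the recorded element."""
    pairs = [
        (recorded.tag, candidate.tag),
        (recorded.text, candidate.text),
        (recorded.role, candidate.role),
    ]
    return sum(a == b for a, b in pairs) / len(pairs)


def locate(recorded: Element, dom: list[Element], min_score: float = 0.6) -> Optional[Element]:
    # 1. Primary locator: the id captured when the test was recorded.
    for element in dom:
        if element.id == recorded.id:
            return element
    # 2. Healing: take the best attribute match if it is confident and unambiguous.
    ranked = sorted(dom, key=lambda e: similarity(recorded, e), reverse=True)
    if not ranked or similarity(recorded, ranked[0]) < min_score:
        return None  # signals too weak, e.g. after a major redesign
    if len(ranked) > 1 and similarity(recorded, ranked[1]) == similarity(recorded, ranked[0]):
        return None  # two equally good candidates: refuse to guess
    return ranked[0]


recorded = Element("submit-btn", "button", "Place order", "button")

# The id was renamed but everything else is intact: the locator heals.
dom_renamed = [
    Element("order-submit", "button", "Place order", "button"),
    Element("cancel", "button", "Cancel", "button"),
]
healed = locate(recorded, dom_renamed)

# The page was redesigned: nothing resembles the recorded element.
dom_redesigned = [
    Element("cta-1", "a", "Buy now", "link"),
    Element("cta-2", "a", "Continue", "link"),
]
missing = locate(recorded, dom_redesigned)
print(healed, missing)
```

Returning nothing in the weak and ambiguous cases is deliberate: a locator that silently heals onto the wrong element produces a passing test that no longer checks what it was written to check.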
