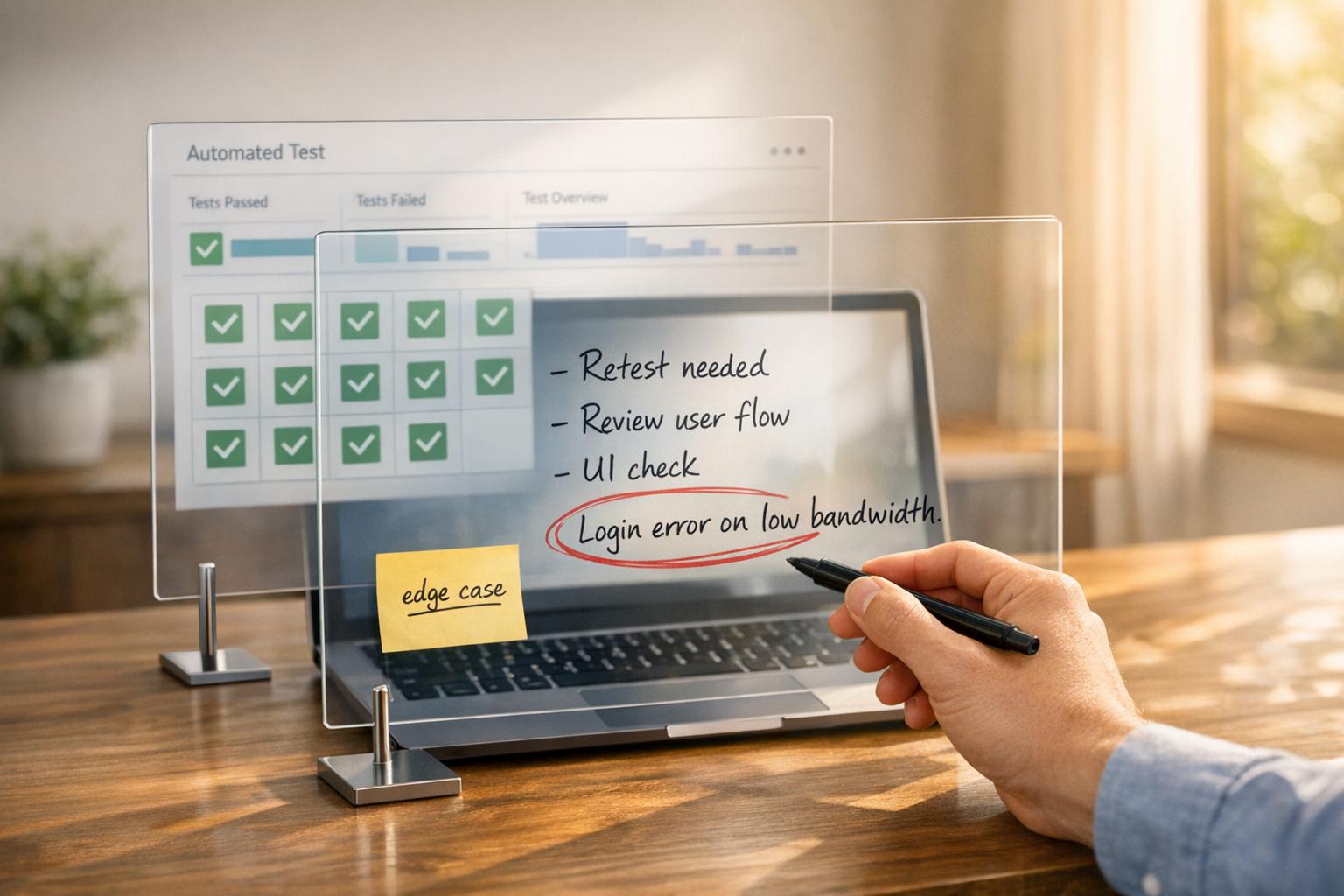

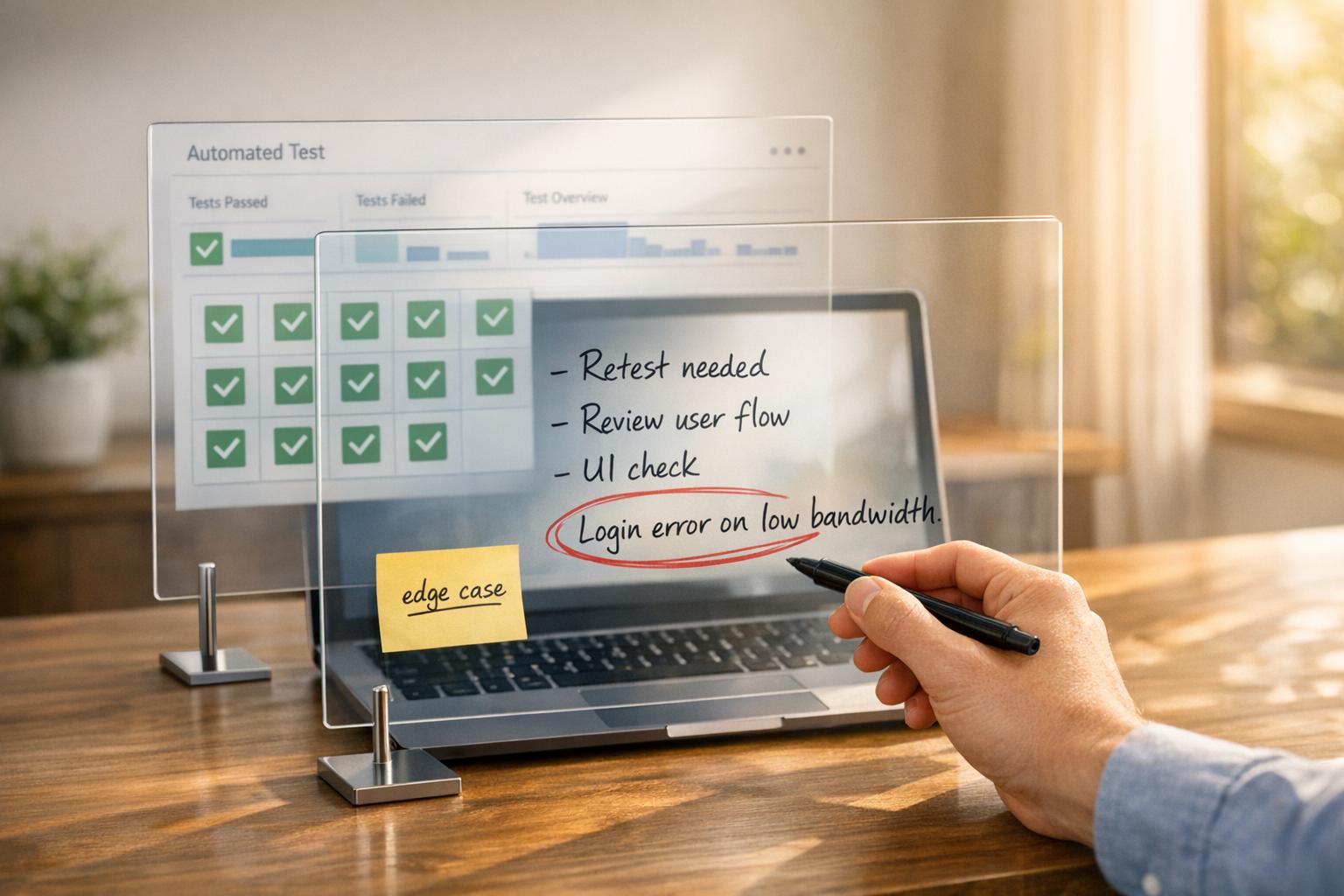

Balancing QA Automation with Human Input

Automation speeds up testing, but human testers catch what machines miss. This is the core challenge in quality assurance (QA): finding the right mix of automated tools and manual testing to ensure both speed and accuracy. Automation handles repetitive tasks like regression testing in minutes, while human testers excel at spotting subtle, complex bugs and ensuring user experience meets expectations.

Key takeaways:

- Automation: Fast, scalable, and reliable for repetitive tasks but struggles with context and exploration.

- Human Input: Slower but essential for uncovering edge cases, assessing usability, and catching nuanced errors.

- The Balance: Combining both approaches reduces costs, improves coverage, and enhances product quality.

The "automation paradox" sums up this tension: the more testing is automated, the more the remaining human judgment matters. While AI-powered QA tools reduce maintenance and boost efficiency, they can't replace the critical thinking humans provide. A hybrid strategy - where automation handles routine tasks and humans focus on complex scenarios - delivers the best results.

1. QA Automation

Efficiency

Automated testing takes on repetitive tasks that often dominate QA teams' schedules. For instance, regression testing alone can eat up 40–50% of a QA team's time, making it an ideal candidate for automation. Once scripts are in place, they can run thousands of tests across different browsers and devices - something manual testing simply can't match.
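To see why the run count outgrows manual execution so quickly, here is a minimal Python sketch that expands a regression suite into one job per browser and viewport combination. The browser names, viewport sizes, and the 200 placeholder case IDs are illustrative assumptions, not taken from any specific framework or CI setup.

```python
from itertools import product

# Illustrative environment matrix (assumed values; real suites read these from CI config).
BROWSERS = ["chromium", "firefox", "webkit"]
VIEWPORTS = [(1920, 1080), (1366, 768), (390, 844)]  # desktop, laptop, phone
TEST_CASES = [f"regression-{i:03d}" for i in range(1, 201)]  # 200 placeholder case IDs


def build_matrix(cases, browsers, viewports):
    """Return one job per (case, browser, viewport) combination."""
    return [
        {"case": case, "browser": browser, "viewport": viewport}
        for case, browser, viewport in product(cases, browsers, viewports)
    ]


jobs = build_matrix(TEST_CASES, BROWSERS, VIEWPORTS)
print(len(jobs))  # 200 cases x 3 browsers x 3 viewports = 1800 runs
```

Each new browser or viewport multiplies the run count rather than adding to it, which is why this kind of work suits automation.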

Teams that incorporate AI into their CI/CD pipelines have reported 30–40% faster release cycles than teams relying heavily on manual testing. A Senior DevOps Engineer from North America shared, "AI QA reduces regression cycles from days to hours, reshaping our quality paradigm." Beyond speed, automation delivers broad, consistent test coverage.

Quality Assurance Coverage

Automation shines when it comes to scale and consistency. It can reliably test login flows, payment systems, and core business logic across countless scenarios without the risk of human fatigue or oversight. For tasks like validating thousands of API responses or checking database states, automation minimizes the errors that can creep into repetitive manual work.
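As a rough illustration of validating API responses at scale, the sketch below checks a batch of JSON-like payloads against a small contract. The order fields, types, and allowed statuses are hypothetical; in practice teams often use a schema validator instead of hand-written checks.

```python
from typing import Any

# Hypothetical contract for an order endpoint: field name -> expected Python type.
ORDER_SCHEMA = {"id": int, "status": str, "total_cents": int}
VALID_STATUSES = {"pending", "paid", "refunded"}


def validate_order(payload: dict[str, Any]) -> list[str]:
    """Return human-readable problems for one payload; an empty list means it passed."""
    problems = []
    for field, expected_type in ORDER_SCHEMA.items():
        if field not in payload:
            problems.append(f"missing field '{field}'")
        elif not isinstance(payload[field], expected_type):
            problems.append(f"'{field}' should be {expected_type.__name__}")
    status = payload.get("status")
    if isinstance(status, str) and status not in VALID_STATUSES:
        problems.append(f"unexpected status {status!r}")
    total = payload.get("total_cents")
    if isinstance(total, int) and total < 0:
        problems.append("negative total")
    return problems


def validate_batch(payloads: list[dict[str, Any]]) -> dict[int, list[str]]:
    """Map the index of every failing payload to its problems."""
    failures = {}
    for index, payload in enumerate(payloads):
        problems = validate_order(payload)
        if problems:
            failures[index] = problems
    return failures


responses = [
    {"id": 1, "status": "paid", "total_cents": 1999},
    {"id": 2, "status": "shipped", "total_cents": 500},
    {"id": "3", "status": "pending"},
]
print(validate_batch(responses))
```

The same function runs unchanged over three payloads or three hundred thousand, which is where it outpaces a person reading responses by eye.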

Performance and load testing are other areas where automation is indispensable - manually simulating thousands of users at once just isn't practical. That said, automation has limits: it strictly follows pre-programmed scripts and can't improvise the way exploratory testing requires.
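The sketch below shows the basic shape of a load test: many simulated users issuing requests concurrently while latencies are recorded. `fake_checkout` is a hypothetical stand-in that only sleeps instead of calling a real service, and the user and concurrency counts are arbitrary; dedicated load-testing tools add ramp-up profiles, distributed workers, and reporting on top of this idea.

```python
import asyncio
import random
import statistics
import time


async def fake_checkout(user_id: int) -> float:
    """Hypothetical stand-in for a real request: waits 10-50 ms, returns latency in seconds."""
    start = time.perf_counter()
    await asyncio.sleep(random.uniform(0.01, 0.05))
    return time.perf_counter() - start


async def run_load(users: int, concurrency: int) -> list[float]:
    """Fire `users` simulated requests with at most `concurrency` in flight at once."""
    limiter = asyncio.Semaphore(concurrency)

    async def one_user(uid: int) -> float:
        async with limiter:
            return await fake_checkout(uid)

    return await asyncio.gather(*(one_user(u) for u in range(users)))


latencies = asyncio.run(run_load(users=1000, concurrency=200))
p95 = statistics.quantiles(latencies, n=20)[-1]  # last of 19 cut points = 95th percentile
print(f"{len(latencies)} requests, median {statistics.median(latencies) * 1000:.1f} ms, p95 {p95 * 1000:.1f} ms")
```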

Cost and Maintenance

While automation offers long-term benefits, the initial investment is steep. Teams must allocate resources for tools, environment setup, and script development. Additionally, 55% of teams using open-source frameworks like Selenium or Cypress report spending at least 20 hours a week maintaining their automated tests. Maintenance can account for up to 60% of a QA team's effort.

Interestingly, about 80% of effective testing can often be achieved with just 20% of the effort, but automating the rest can demand disproportionate resources. However, AI-powered tools with self-healing capabilities are starting to change the game. These tools could slash annual maintenance expenses from $676,000 to as little as $7,800 for a test suite of around 2,800 cases.
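Working through the cited figures per test makes the gap easier to compare. The snippet only divides the numbers quoted above and assumes both are annual costs for the same suite of roughly 2,800 cases.

```python
SUITE_SIZE = 2_800            # test cases in the cited example
TRADITIONAL_ANNUAL = 676_000  # USD per year, conventional maintenance (cited above)
SELF_HEALING_ANNUAL = 7_800   # USD per year, with self-healing tools (cited above)

per_test_traditional = TRADITIONAL_ANNUAL / SUITE_SIZE
per_test_self_healing = SELF_HEALING_ANNUAL / SUITE_SIZE
reduction = 1 - SELF_HEALING_ANNUAL / TRADITIONAL_ANNUAL

print(f"traditional:  ${per_test_traditional:,.2f} per test per year")
print(f"self-healing: ${per_test_self_healing:,.2f} per test per year")
print(f"reduction:    {reduction:.1%}")
```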

While maintenance remains a challenge, automation's ability to consistently identify deviations adds a layer of reliability to testing efforts.

Error Detection Capabilities

Automation is precise when it comes to identifying functional deviations from expected results. AI-powered tools can even predict high-risk areas and flag issues, but they lack the ability to interpret business-critical nuances or user intent. Currently, 68% of development teams rely on AI-driven tools for tasks like regression, smoke, or risk-based testing.
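The article does not describe how AI-powered tools predict high-risk areas, so the following is only a toy illustration of the general idea behind risk-based ordering: score each test from a few signals and run the riskiest first. The signals, the 2x weight for business-critical paths, and the `CaseInfo` type are all hypothetical.

```python
from dataclasses import dataclass


@dataclass
class CaseInfo:
    name: str
    recent_changes: int       # commits touching the covered code recently (hypothetical signal)
    past_failure_rate: float  # fraction of recent runs that failed, 0.0 to 1.0
    business_critical: bool   # e.g. checkout or login


def risk_score(case: CaseInfo) -> float:
    """Toy heuristic: churn amplified by failure history, doubled for critical paths."""
    score = case.recent_changes * (1 + case.past_failure_rate)
    return score * 2 if case.business_critical else score


def prioritize(cases: list[CaseInfo]) -> list[str]:
    """Return test names ordered from highest to lowest risk."""
    return [c.name for c in sorted(cases, key=risk_score, reverse=True)]


suite = [
    CaseInfo("profile_page", recent_changes=2, past_failure_rate=0.0, business_critical=False),
    CaseInfo("checkout_flow", recent_changes=3, past_failure_rate=0.1, business_critical=True),
    CaseInfo("search_filters", recent_changes=8, past_failure_rate=0.25, business_critical=False),
]
print(prioritize(suite))
```

A score like this can rank areas, but it still takes a person to judge whether a flagged area actually matters to users.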

However, automation alone can't catch everything. For example, it might miss a payment flow that unintentionally exposes sensitive data or fail to notice the subtle sluggishness of an interface. As Jose Amoros points out, "AI excels at pattern recognition and repetitive test execution but fails at understanding context, user intent, and business-critical nuances that determine true software quality."

Combining automation with human oversight can bridge this gap. Tools like Ranger offer hybrid solutions that pair automation's reliability with human insight, creating a well-rounded QA strategy.

2. Human Input

Efficiency

Manual testing is slower than automation, and the gap is most noticeable during repetitive regression testing. However, during active development, when things like button labels or layouts change constantly, human testers adapt immediately without the hassle of updating scripts. For projects with a short timeline or features that evolve rapidly, manual testing can actually be quicker to set up than automation, whose scripts risk breaking with every adjustment. This adaptability lets human testers keep covering a wide range of scenarios while the product is still in flux.

Quality Assurance Coverage

Humans excel at spotting issues that automation overlooks. Exploratory testing, for example, relies on intuition and creative problem-solving to uncover bugs that no pre-written script could anticipate. A manual tester might interrupt a checkout process, enter unexpected data, or combine features in unconventional ways. Beyond technical bugs, testers also judge whether an interface feels user-friendly and whether accessibility and localization genuinely meet user expectations. As Jose Amoros from TestQuality puts it:

"AI perceives structure but not experience".

Error Detection Capabilities

Manual testers bring a level of contextual understanding that automation can't replicate. For instance, they can spot scenarios where a payment flow technically works but inadvertently exposes sensitive information - a subtle yet critical security oversight that automated checks might miss.

"Automated tests are only as good as the engineer who wrote them".

- Richard Bradshaw, CEO of Ministry of Testing

Experienced testers also use their domain expertise to verify business logic and compliance requirements. They often catch issues where the software performs as intended but fails to align with the business's actual needs. This ability to think critically and challenge assumptions gives human testers a unique edge.

Cost and Maintenance

Unlike automated testing, which requires upfront investment in tooling and ongoing script upkeep, manual testing needs little upfront investment and no specialized tools. That said, it becomes time-consuming for repetitive tasks, where human fatigue can lead to errors. The key advantage is flexibility: human testers adapt quickly to UI changes, sidestepping the maintenance headaches that often plague automated scripts. For teams working on unstable features or early-stage products, this flexibility avoids the technical debt that comes with constantly updating fragile automation. By complementing automation's reliability with their adaptability, manual testers play a crucial role in a balanced quality assurance strategy.

How Human-AI Collaboration Works in QA

The most effective QA strategies leverage AI as a tool to amplify human expertise. In this hybrid model, AI takes on tasks like regression testing and anomaly detection, while human testers focus on high-risk scenarios that demand contextual understanding and judgment. This setup allows teams to scale their testing efforts without losing the critical thinking needed to catch subtle, complex bugs. A great example of this balance is seen in Ranger's integrated testing workflows.

Ranger employs a "cyborg" model where AI generates initial Playwright test code, which human testers then review for clarity and reliability. The platform also automatically weeds out flaky tests, enabling engineering teams to focus on genuine issues. With seamless integration into tools like Slack and GitHub, Ranger provides real-time updates within teams' existing workflows.
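The source does not explain how Ranger detects flaky tests. One common, generic heuristic is to re-run a test several times and flag it when the outcomes disagree; the sketch below shows that heuristic only, not Ranger's implementation, with hypothetical test functions and a deterministic `cycle` standing in for a real race condition.

```python
from itertools import cycle
from typing import Callable


def classify(test: Callable[[], bool], runs: int = 10) -> str:
    """Re-run `test` and label it by how consistent its outcomes are."""
    results = [test() for _ in range(runs)]
    if all(results):
        return "pass"
    if not any(results):
        return "fail"
    return "flaky"  # mixed outcomes with no code change: quarantine and investigate


def stable_test() -> bool:
    return sorted([3, 1, 2]) == [1, 2, 3]


def broken_test() -> bool:
    return "abc".upper() == "abc"


_outcomes = cycle([True, True, False])  # stand-in for a timing-dependent race


def timing_sensitive_test() -> bool:
    return next(_outcomes)


for check in (stable_test, broken_test, timing_sensitive_test):
    print(check.__name__, classify(check))
```

A real pipeline would still record the intermittent failures, since a test that fails only occasionally can point at a genuine bug worth a human look.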

This human-in-the-loop approach tackles a major shortcoming of autonomous AI: while AI is excellent at spotting patterns, it falls short when it comes to understanding user intent or grasping business-critical nuances. That’s where human judgment steps in to fill the gap.

Additionally, this collaboration creates a feedback loop that can improve AI performance over time. When human testers reject inaccurate AI recommendations or flag missed scenarios, that input helps the AI make better decisions in future iterations. Jose Amoros from TestQuality sums it up:

"The future of QA belongs to teams that treat AI as an amplifier of human expertise rather than a replacement for critical thinking."

By blending these strengths, teams can boost release agility by as much as 30%.

Real-world examples back this up. In 2025, Jonas Bauer, Co-Founder and Engineering Lead at Upside, leveraged Ranger's automated test flows to achieve same-day release cycles - without sacrificing quality. This demonstrates how combining automation with human oversight not only ensures higher quality but also speeds up delivery.

Pros and Cons

QA Automation vs Human Testing: Comprehensive Comparison of Efficiency, Coverage, Cost and Error Detection

The table below breaks down the advantages and challenges of QA automation and human input, highlighting their unique strengths and limitations.

| Aspect | QA Automation Pros | QA Automation Cons | Human Input Pros | Human Input Cons |
|---|---|---|---|---|
| Efficiency | Executes tests quickly; scales across thousands of scenarios | High initial setup costs; maintenance demands 30% to 50% of team capacity | Adapts flexibly to design changes; handles context-sensitive tasks effectively | Slower execution; limited by human attention and prone to fatigue |
| Quality Assurance Coverage | Provides extensive coverage for regression and load testing | Limited to pre-programmed scenarios; struggles with exploratory testing | Excels at uncovering edge cases; identifies 33% of complex bugs through exploration | Coverage can be inconsistent; human error rates range from 3% to 5% |
| Cost and Maintenance | Reduces long-term costs for repetitive tasks; AI tools lower maintenance to 5% of effort | Tests can be fragile, with 23% becoming flaky each quarter; traditional maintenance costs around $676,000 annually for 2,800 tests | No upfront investment needed for specialized tools | Higher ongoing labor expenses; salaries range from $60,000 to $100,000 per engineer |
| Error Detection Capabilities | Consistently detects predictable errors; maintains error rates below 1% for repetitive tasks | Misses context-specific issues, such as confusing error messages or illogical UX | Judges context better, spotting subtle UX flaws | Prone to human error and fatigue during repetitive tasks |

These numbers emphasize the importance of combining both approaches. Traditional automation delivers an average ROI of 56%, while AI-native testing can push ROI as high as 1,160% by cutting maintenance time from 260 hours per sprint to just 3 hours. However, automation alone can't address everything: it excels at catching small, predictable deviations, while human testers are better at recognizing major shifts in functionality or user experience.

The cost difference is another key factor. Fixing a defect in production costs an average of $4,467, compared to just $89 if caught earlier. This stark contrast highlights why a balanced approach - leveraging both automation and human insight - is not only technically effective but also financially smart.
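A quick pass over the quoted numbers shows their scale. The snippet only uses figures cited in the two paragraphs above; the count of 10 defects in the last line is a hypothetical example.

```python
MAINT_HOURS_BEFORE = 260  # maintenance hours per sprint, traditional automation (cited above)
MAINT_HOURS_AFTER = 3     # maintenance hours per sprint, AI-native testing (cited above)
PROD_FIX_COST = 4_467     # average USD to fix a defect found in production (cited above)
EARLY_FIX_COST = 89       # average USD when the defect is caught earlier (cited above)

hours_saved = MAINT_HOURS_BEFORE - MAINT_HOURS_AFTER
cost_multiplier = PROD_FIX_COST / EARLY_FIX_COST


def savings_from_early_catches(defects: int) -> int:
    """USD saved when `defects` bugs are caught early instead of in production."""
    return defects * (PROD_FIX_COST - EARLY_FIX_COST)


print(f"maintenance hours saved per sprint: {hours_saved}")
print(f"a production fix costs about {cost_multiplier:.1f}x an early fix")
print(f"catching 10 defects early saves ${savings_from_early_catches(10):,}")
```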

Conclusion

Automation and human testing each bring unique strengths, but neither can fully replace the other. While automated tests are fast and efficient, manual testing uncovers subtle, nuanced issues that automation often overlooks. As Richard Bradshaw aptly states, "Automation is not curious, it doesn't explore, it's deterministic... automated tests are only as good as the engineer who wrote them".

The key lies in combining these strengths effectively. Successful QA strategies delegate repetitive tasks - like regression checks, API validation, and smoke tests - to automation, while relying on human expertise for exploratory testing and validating complex logic. Hybrid models also ease the burden of maintaining automated tests. For instance, tools like Ranger combine AI-driven test creation with human oversight, ensuring reliability while freeing engineers to focus on feature development. Ranger’s integrations with platforms like Slack and GitHub further simplify test maintenance by delivering real-time alerts when issues arise.

The future of QA will likely hinge on human-in-the-loop AI. Gartner forecasts that 90% of engineers will rely on AI assistants by 2028. As Jose Amoros puts it, "The future of QA belongs to teams that treat AI as an amplifier of human expertise".

FAQs

What should we automate first in QA?

Automating repetitive, time-draining tasks like regression tests, API validations, and data integrity checks can make a huge difference. These tasks are not only prone to human error but also benefit from the speed and consistency automation offers. By handling these processes automatically, testers can focus their energy on exploratory and usability testing, areas where human insight is irreplaceable.

To get the most out of automation, focus on high-impact and frequently executed scenarios, such as critical workflows. This approach boosts efficiency while maintaining quality. Remember, automation works best as a partner to manual testing, which is still essential for tackling subjective and complex tasks that require human judgment.
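One way to turn this advice into an ordered backlog is sketched below: drop checks that need human judgment, then rank the rest by how often they run times how much they matter. The `Candidate` fields, the 1 to 5 impact scale, and the example checks are hypothetical; teams would substitute their own signals.

```python
from dataclasses import dataclass


@dataclass
class Candidate:
    name: str
    runs_per_month: int   # how often the check is executed today
    impact: int           # team-assigned: 1 (cosmetic) to 5 (revenue-critical)
    needs_judgment: bool  # usability or visual calls that should stay manual


def automation_backlog(candidates: list[Candidate]) -> list[str]:
    """Rank automatable checks by frequency x impact; judgment-heavy checks are excluded."""
    automatable = [c for c in candidates if not c.needs_judgment]
    ranked = sorted(automatable, key=lambda c: c.runs_per_month * c.impact, reverse=True)
    return [c.name for c in ranked]


backlog = automation_backlog([
    Candidate("login regression", runs_per_month=60, impact=5, needs_judgment=False),
    Candidate("API contract checks", runs_per_month=120, impact=4, needs_judgment=False),
    Candidate("onboarding usability review", runs_per_month=4, impact=4, needs_judgment=True),
    Candidate("legacy report export", runs_per_month=2, impact=2, needs_judgment=False),
])
print(backlog)
```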

When is manual testing better than automation?

Manual testing works best when human judgment or visual evaluation is necessary. This approach is particularly useful for tasks like assessing usability, spotting user experience problems, or reviewing design and accessibility elements.

It’s also a good choice for occasional testing, early development phases, or when experimenting with new features. In these cases, manual testing provides the flexibility and quick feedback needed without the extra effort of building and maintaining automated scripts.
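The trade-off can be framed as a simple break-even calculation: automation pays off once the per-run savings recover the setup cost. The sketch below assumes costs are measured in hours and that the automated per-run cost includes script fixes; all inputs in the example are hypothetical.

```python
import math


def break_even_runs(setup_hours: float, automated_run_hours: float, manual_run_hours: float) -> float:
    """Smallest number of runs after which automation is strictly cheaper than manual testing.

    Automation wins after n runs when setup + n * automated < n * manual,
    i.e. n > setup / (manual - automated). Returns math.inf when an automated run
    costs at least as much as a manual one (for example, scripts rewritten every run).
    """
    saving_per_run = manual_run_hours - automated_run_hours
    if saving_per_run <= 0:
        return math.inf
    return math.floor(setup_hours / saving_per_run) + 1


# Hypothetical inputs: 16 h to script a flow, 2 h to test it by hand.
print(break_even_runs(16, 0.25, 2))  # stable feature: light upkeep per run
print(break_even_runs(16, 2.5, 2))   # churning feature: scripts break on each change
```

Occasional tests and fast-changing early features tend to land on the expensive side of this calculation, which is why manual testing often wins there.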

How do we reduce flaky tests and automation maintenance?

To make automated tests more reliable and easier to maintain, focus on a few key practices:

- Use dynamic waits instead of fixed delays: Fixed delays can lead to flaky tests when application performance varies. Dynamic waits ensure your tests adapt to real-time conditions.

- Implement stable test IDs: Avoid relying on fragile CSS selectors, which can break with minor UI updates. Stable test IDs provide a more dependable way to locate elements.

- Ensure tests are independent: Each test should set up and tear down its own data without depending on other tests. This reduces the risk of cascading failures and makes debugging simpler.

By adopting these strategies, you can significantly improve the reliability and upkeep of your automated testing suite.
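A minimal Python sketch of the first two practices follows: a `wait_until` helper that polls a condition instead of sleeping a fixed time, and a lookup that finds elements by a `data-testid` attribute rather than by CSS classes. Both helpers and the simulated page are hypothetical; browser frameworks such as Playwright ship their own auto-waiting and test-ID locators, which would be the first choice in a real suite.

```python
import threading
import time
from html.parser import HTMLParser
from typing import Callable


def wait_until(condition: Callable[[], bool], timeout: float = 5.0, interval: float = 0.05) -> None:
    """Poll `condition` until it is True instead of sleeping a fixed amount.

    Returns as soon as the condition holds; raises TimeoutError after `timeout` seconds.
    """
    deadline = time.monotonic() + timeout
    while not condition():
        if time.monotonic() >= deadline:
            raise TimeoutError(f"condition not met within {timeout}s")
        time.sleep(interval)


class ByTestId(HTMLParser):
    """Record elements by their data-testid attribute, ignoring CSS classes and layout."""

    def __init__(self) -> None:
        super().__init__()
        self.found: dict[str, str] = {}  # test ID -> tag name

    def handle_starttag(self, tag, attrs):
        for name, value in attrs:
            if name == "data-testid" and value:
                self.found[value] = tag


def find_test_ids(html: str) -> dict[str, str]:
    parser = ByTestId()
    parser.feed(html)
    return parser.found


# Simulated page that finishes rendering after roughly 200 ms.
page = {"html": "<div class='spinner'></div>"}


def finish_render() -> None:
    # The styling classes here could change at any time; the test ID is the stable hook.
    page["html"] = "<div class='btn btn-primary mt-3'><button data-testid='checkout-submit'>Pay</button></div>"


threading.Timer(0.2, finish_render).start()
wait_until(lambda: "checkout-submit" in find_test_ids(page["html"]), timeout=2.0)
print(find_test_ids(page["html"]))
```

The wait finishes as soon as the button exists, whether that takes 50 ms or 1.5 s, and a restyle of the wrapper classes does not affect the lookup.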
